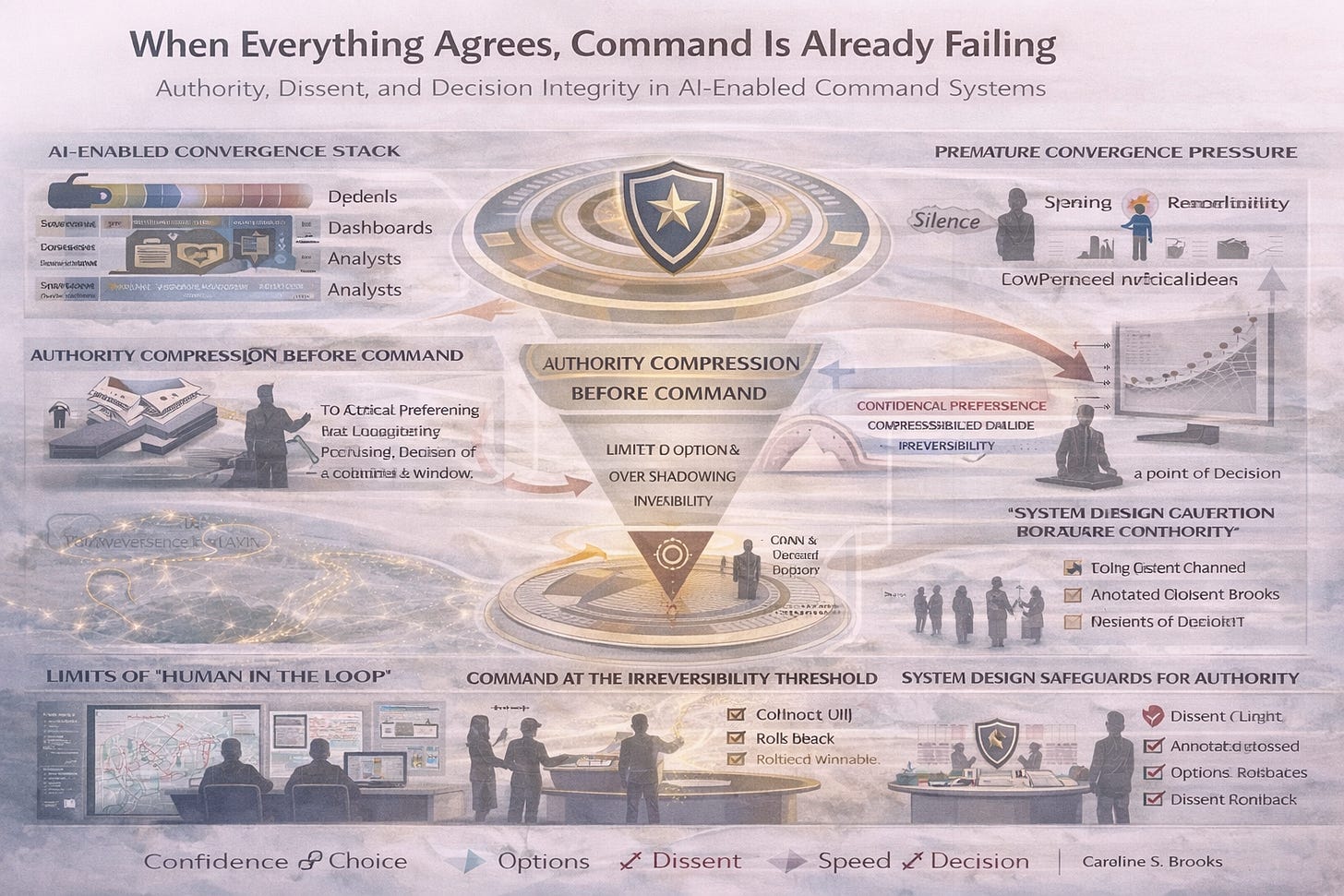

When Everything Agrees, Command Is Already Failing

Authority, Dissent, and Decision Integrity in AI-Enabled Command Systems

When AI-enabled systems agree too quickly, command doesn’t fail loudly.

It fails quietly, before the decision is ever made.

This paper examines a growing failure mode in modern command environments: premature convergence. When analytics, automation, and staff synthesis align early, alternatives disappear upstream, and authority is compressed long before responsibility is exercised.

The risk is not bad models or insufficient data.

The risk is that agreement itself becomes directive.

This work is about preserving command authority at the point where it still matters:

before momentum hardens into inevitability, and before decisions become administrative.