Meaning Architecture and Responsible AI: Complementary Layers of Command Risk

Responsible AI is necessary - but it doesn’t govern everything that can break command.

As AI-enabled systems increasingly shape how information is filtered, framed, and prioritized in operations, a different class of risk is emerging - one that Responsible AI frameworks don’t directly address.

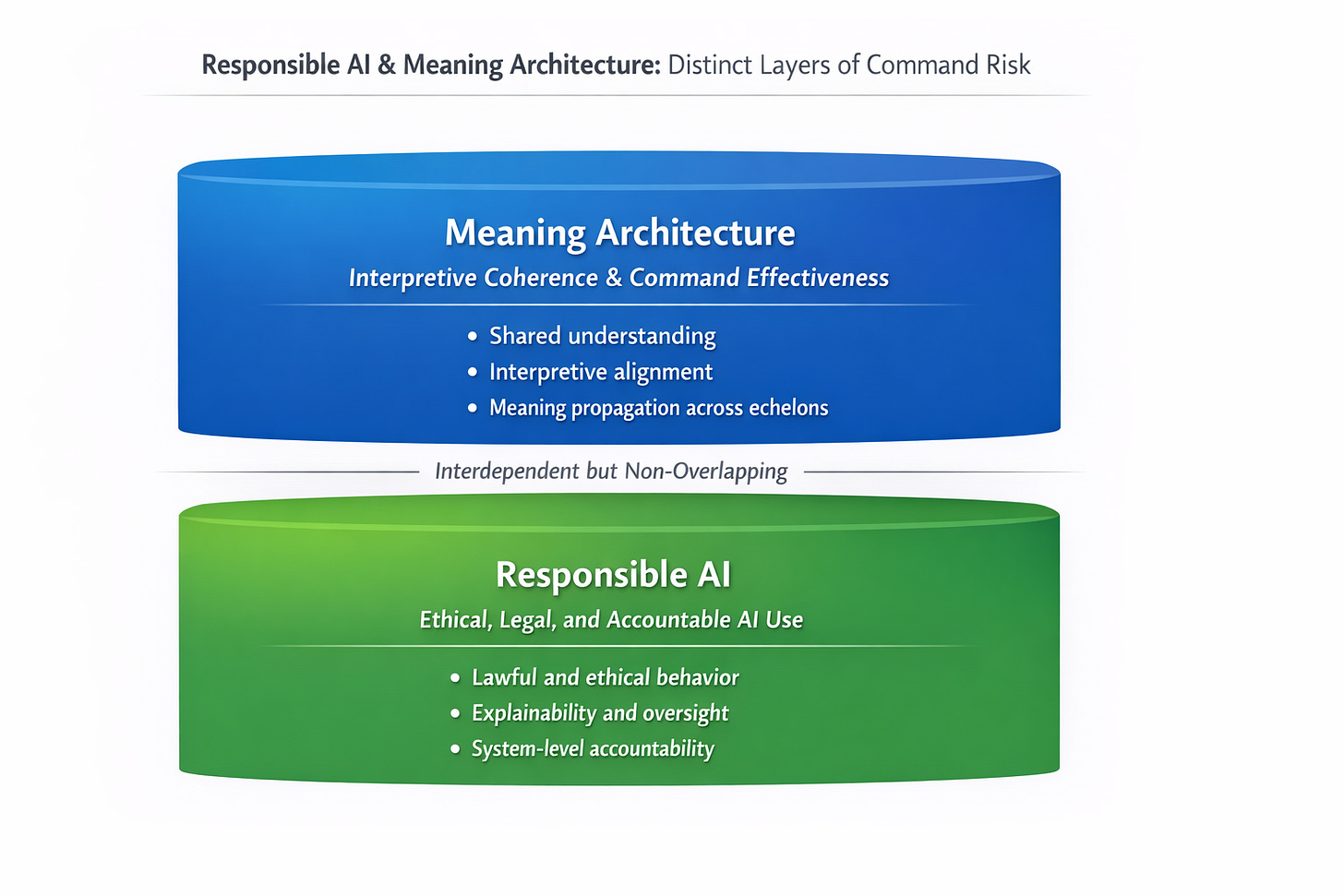

Responsible AI governs whether systems are lawful, ethical, explainable, and accountable.

Meaning Architecture asks a different question: is the force operating from the same understanding of reality?

This short memo clarifies how these two approaches operate at distinct but complementary layers of command risk. Even when AI systems are compliant and functioning as designed, the interpretive dynamics they introduce (how information is filtered, framed, and prioritized) can quietly fracture shared understanding, displace human judgment, and degrade command effectiveness.

This isn’t a critique of Responsible AI. It’s an attempt to name a separate command problem - one that sits upstream of ethics and compliance and directly inside mission command.

If command depends on shared meaning, we should be explicit about how that meaning is formed - and how it can fail.